Market researchers are being squeezed from both sides. Businesses are making decisions faster than research teams can deliver answers. A stakeholder submits a request, and a team scopes, fields, cleans, and delivers three to six weeks later. By then, the decision has already been made.

At the same time, many of the tasks that used to define a researcher's value (designing surveys, cleaning data, summarizing open-ends, and analyzing data) can now be done by AI in minutes.

Researchers are caught between a function that moves too slowly and a role that's being redefined underneath them by AI.

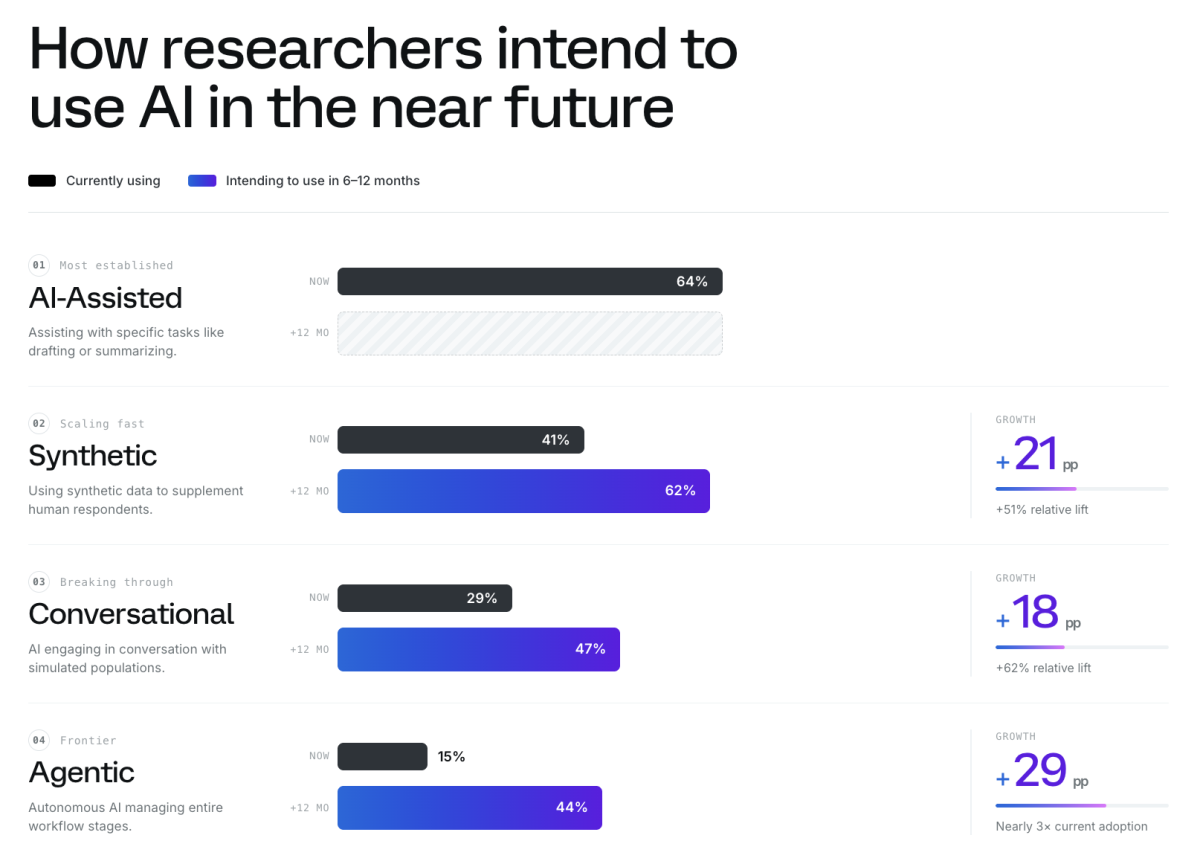

It’s not that research teams aren’t trying to keep pace with AI. More than 95% of market research professionals are already using it for discrete tasks like drafting questions, summarizing findings, and cleaning data.

But the shift that matters is just ahead. In the next six to twelve months, adoption of agentic research systems is set to nearly triple, from 15% to 44%, and synthetic data usage is projected to jump from 41% to 62%.

This shift is rebuilding how research works entirely, creating an always-on intelligence system where AI orchestrates the full lifecycle and researchers do what only humans can: find the signal, build the story, and drive the outcome.

The researcher's real value is moving upstream: spotting what matters in a flood of AI-generated output, building the story around it, and making the organization act. The AI handles the workflow. The researcher makes sure it leads somewhere worth going.

Market research in the agentic era

Last month at IIEX North America, Qualtrics shared our vision for what we're building toward: AI agents designed to close the gap between understanding and outcome by taking researchers from a business question to a decision-ready answer in minutes.

Imagine a scenario where a CMO asks what consumers think about a new category. Instead of a request sitting in a queue while a team scopes, fields, cleans, and delivers intel weeks later, the question triggers an agent that designs the study, fields synthetic and human panels, and delivers answers in a fraction of the time.

The agents Qualtrics is developing will transform every stage of how research gets done. Here’s what each stage looks like.

1. Start with a plan

The agent translates a business objective or question into a structured research plan, recommending the right methodology, tools, and sequence automatically. The researcher reviews and refines rather than building from scratch.

2. Design with rigor built in

Through a conversational interface, the agent guides study design from a blank page to a sound starting point in minutes. Complex approaches like MaxDiff and conjoint analysis, which have traditionally required specialist consultants or research PhDs, are embedded in the workflow and available to any team that needs them. The specialist knowledge doesn't disappear. It becomes part of the system.

3. Field across human and synthetic audiences

The agent fields to the right audience using both traditional and synthetic methods, matching the approach to the question rather than defaulting to one mode.

4. Surface what matters

Once findings are in, the agent delivers key takeaways and actionable recommendations, oriented toward the business decision that triggered the work. The output tells the team what to do about it, not just what the data says.

5. Draw on everything you've ever learned

Underneath all of it, years of institutional research stop sitting in folders and start working. When a business question comes in, the agent draws on everything the organization has ever learned to surface an answer that's ready to act on.

When the research library comes up short, the agents don't just flag the gap and stop. They connect directly to Qualtrics synthetic panels, AI-modeled audiences fine-tuned on validated human survey data, to generate directional insights while full research is being scoped. Teams get an answer fast, grounded in data, with the option to go deeper if the question warrants it.
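
For readers who think in code, the fallback described above can be pictured as a small routing rule. This is a minimal Python sketch, not how Qualtrics agents are implemented: the `library_coverage` score, the `high_stakes` flag, and the 0.7 threshold are all hypothetical stand-ins for whatever signals a real system would use.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    LIBRARY = "answer from existing research"
    SYNTHETIC = "directional answer from a synthetic panel"
    HUMAN = "scope a full human study"


@dataclass
class Question:
    text: str
    library_coverage: float  # hypothetical 0..1 score for how well past research covers the question
    high_stakes: bool        # hypothetical flag for whether the question warrants going deeper


def route(question: Question, coverage_threshold: float = 0.7) -> list[Route]:
    """Return the ordered steps for a question (illustrative logic only)."""
    # The research library is enough: answer from what is already known.
    if question.library_coverage >= coverage_threshold:
        return [Route.LIBRARY]
    # The library comes up short: get a directional read from a synthetic panel first.
    steps = [Route.SYNTHETIC]
    # Go deeper with human research only when the question warrants it.
    if question.high_stakes:
        steps.append(Route.HUMAN)
    return steps


covered = route(Question("How do members rate our app?", 0.9, False))
gap = route(Question("Would members adopt a new fintech product?", 0.2, True))
```

Here `covered` contains only the library step, while `gap` contains the synthetic step followed by the human one. The point of the sketch is that the gap produces an explicit route rather than a silent guess.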

In the agentic era, instead of being buried in execution, researchers will drive strategy, with agents handling the methodology, study design, and synthesis work that used to consume weeks. The researcher shifts from gatekeeper to governor, setting the standards the system upholds.

This opens up a whole new operating model where teams use synthetic panels to explore directional answers quickly, deepen understanding through human research, and scale decisions with confidence.

What this looks like in practice

When Navy Federal Credit Union needed to answer strategic questions about technology adoption and fintech interest, they faced the classic constraints: long panel timelines, subpopulation access challenges, and a business that needed answers faster than traditional fielding could deliver. Using Qualtrics synthetic panels, they completed the research in under 4 hours versus 5 days, with key findings consistent across synthetic and human samples and representational fidelity within ±5% of their member base.
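
One way to picture a fidelity check like this is to compare each group's share in the synthetic sample against its share in the reference population. The case study does not say how the ±5% figure was measured, so the sketch below assumes it means a gap of at most five percentage points per group. The group labels and numbers are invented for illustration and are not from the study.

```python
def max_share_gap(sample: dict[str, float], reference: dict[str, float]) -> float:
    """Largest absolute gap, in percentage points, between sample and reference shares."""
    groups = set(sample) | set(reference)
    # A group missing from one side counts as a 0% share on that side.
    return max(abs(sample.get(g, 0.0) - reference.get(g, 0.0)) for g in groups)


def within_tolerance(sample: dict[str, float], reference: dict[str, float],
                     tolerance_pts: float = 5.0) -> bool:
    """True if every group's share is within the tolerance (assumed: percentage points)."""
    return max_share_gap(sample, reference) <= tolerance_pts


# Illustrative shares in percent; not data from the case study.
member_base = {"18-34": 30.0, "35-54": 40.0, "55+": 30.0}
synthetic_sample = {"18-34": 33.0, "35-54": 38.0, "55+": 29.0}

largest_gap = max_share_gap(synthetic_sample, member_base)
passes = within_tolerance(synthetic_sample, member_base)
```

With these made-up numbers the largest gap is three points, so the check passes. A real validation would likely look at more dimensions than a single demographic cut.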

Booking.com ran a psychographic segmentation study using synthetic panels to inform more personalized social creatives. Synthetic responses maintained focus across the sustained questioning that rigorous segmentation demands and produced the same degree of variance within segments as a human sample, a result validated through parallel human and synthetic studies. The team received the creative strategy insight faster than a human-only approach would have allowed.

What to look for as agentic AI reshapes research

The shift toward AI agents in research is real, but so is the noise. A lot of what's being positioned as an "AI research agent" today is task automation wearing a larger label. As you evaluate what's worth investing in, a few questions cut through it.

Does rigor travel with access?

Agents that broaden research access are only valuable if methodology comes along. A system that lets anyone run a study without guardrails is distributing the risk of bad research more broadly.

Does the system know what it doesn’t know?

Any AI working with institutional knowledge will hit gaps. The question is whether it flags those gaps and routes appropriately, or fills them with confident-sounding outputs that aren't grounded in real data.

What's the synthetic model actually trained on?

The same scrutiny applies to synthetic panels and personas, which will become increasingly central to how agentic research systems close research gaps quickly. Most general-purpose LLMs return the same narrow set of answers over and over, and those answers don't reflect how human populations actually respond. The standard is synthetic data that produces the same decisions you'd reach with human data. Research-grade outputs need research-grade training data, which is the approach we’re taking at Qualtrics.
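
The "same decisions" standard can be made concrete with a simple parallel test: run the same study on a human sample and a synthetic one, then check whether both point to the same choice. The sketch below is an assumption about what such a check could look like, not a description of Qualtrics' validation method. It uses the highest mean rating as the decision rule, and the concept names and ratings are invented.

```python
from statistics import mean


def preferred_option(scores: dict[str, list[float]]) -> str:
    """Return the option with the highest mean rating (an assumed decision rule)."""
    return max(scores, key=lambda option: mean(scores[option]))


def same_decision(human: dict[str, list[float]], synthetic: dict[str, list[float]]) -> bool:
    """True if both samples lead to the same preferred option."""
    return preferred_option(human) == preferred_option(synthetic)


# Illustrative 1-5 ratings for two hypothetical concepts.
human_ratings = {"concept_a": [4, 5, 4, 3], "concept_b": [3, 3, 4, 2]}
synthetic_ratings = {"concept_a": [4, 4, 5, 4], "concept_b": [3, 4, 3, 3]}

agree = same_decision(human_ratings, synthetic_ratings)
```

In this toy example both samples favor `concept_a`, so `agree` is true even though the individual ratings differ. The framing matters: the test is whether the decision matches, not whether every number does.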

Does it connect insight to action?

A system that produces faster findings but leaves the "so what" to the researcher hasn't solved the real problem. The distance between insight and action is where most research value gets lost.

These are design choices that determine whether AI-assisted research produces outputs worth acting on, or outputs that look credible until they don't.

The researcher’s moment

Research has spent decades earning its seat at the table. Agentic AI resets the table entirely, giving the function the speed and power to close the gap between understanding and outcome, so that every decision across the organization runs through the intelligence researchers built.

The researchers who thrive will be the ones who become architects of that system: setting the standards, shaping the questions, and making sure that faster research also means better decisions.

The expertise doesn't get replaced. It gets scaled.