What is ad testing?

Ad testing is simply the process of vetting your ad concepts with a representative sample of your market. It establishes an ad’s effectiveness based on consumer responses, feedback, and behaviour. You can test multiple ads, a complete ad, or even portions of your ad to make sure your ads will resonate with your target audience.

Why test your ad?

No matter how much money you throw at your advertising campaign, which celebrity endorses your product, or how many expensive special effects it includes, if it doesn’t resonate with and influence your target audience, it’s wasted ad spend.

Ad testing has several tangible benefits. Consistent pre-testing improves an ad’s effectiveness by at least 20%, according to Millward Brown. Not only that, an ad’s content and creativity influenced profits four times more than media placement alone, according to research in AdMap.

“I’ve certainly got enough evidence, real hard evidence, showing that ads we’ve pretested perform better in the marketplace than ads we don’t. It’s inarguable proof.”

– Keith Weed, Chief Marketing and Communications Officer, Unilever

Advertising is often one of the primary tactics in a marketer’s plan. The planning, production, marketing communications, and media costs can all add up, particularly if you want your campaign to reach far and wide. Ad testing is how you make sure ad spend is worth it.

As you look to maximise the ROI of your adverts, ad testing can help make sure your advertisement resonates with your target audience, leading to a better conversion rate and helping to cement your brand and boost the positive associations that come with it.

Get started with our free ad testing survey template

How do you test advertising effectiveness?

Quite simply, with market research:

- Take a pre-selected segment of your audience that represents the target group for your campaign, such as people in a particular age group, those in specific job roles, or a combination of your preferred criteria.

- Show respondents the ad – different versions of the same advert, or different ad concepts

- Survey respondents to find out what they thought of it

- Test before, during, and after launch. Pre-testing ad concepts can help you make sure your campaign starts on the right note and avoid costly pitfalls. In-flight monitoring of a campaign shows you the performance arc across its lifecycle. This helps you pinpoint where conversions occurred and how sentiment and purchase decisions evolve over time in response to your ad.

Create compelling ad concepts

The first step to testing ads is coming up with great concepts, but crafting the perfect ad can be a daunting task. The following guidelines can help you lay the foundation for a winning ad.

Keys to ad success

- Focus on what makes you different. Showcase your value and uniqueness in your ad to stand apart from other ads and your competition.

- Include a clear call to action. Your ad should have a purpose, whether that’s driving traffic to your website, learning more about your products and solutions, or a direct sale. Make your call to action clear and unmistakable.

- Design visually interesting ads. Interesting images will capture attention, increasing the likelihood of customers engaging with your ad.

- Keep your message clear. Including a clear, simple message helps you better communicate your ideas and engage with your audience. Just because your product has various benefits doesn’t mean they all need to be mentioned in the same advert.

- Understand international nuances. To help your ad land, be cognisant of language differences. In software, your call to action might be “Request Demo” in the United States, but “Book Demo” in Australia.

- Use the wisdom of the crowd. Coming up with amazing ad concepts on your own might be daunting. If you don’t have an in-house agency or creative team, spread the creative burden across your organisation by asking each person to come up with a few ideas. You’ll find hidden gems.

- Know your audience. The way you engage may need to differ from one group of customers to another. For B2B, you may lead with an emotional appeal for executives, but with a more data-driven, outcomes-focused appeal for practitioners.

Pitfalls to avoid

- Assuming you know your market. The way marketing teams talk about products or solutions may not match the way customers talk about your products and services. This is one of the most important reasons to validate your ads before launching. You may even consider some preliminary research to better understand your market when designing ads.

- Using language that is too salesy. Enticing your market to engage with your ad is the goal, but using language that is too pushy may turn off potential customers.

- Designing distracting ads. There’s a fine line between catching someone’s eye and being over the top. Always go for being clear over clever.

- Sending mixed call-to-action signals. Best practice is to use a single call to action, such as “learn more” or “book now”. When you give multiple calls to action, your consumer may not know what to do and may disengage from your ad.

- Not connecting your ad with your brand. You want to ensure that your consumers can identify your ad with your brand – it’s not uncommon for consumers to see an ad and associate it with your competitors, so make sure you’re not spending your ad budget giving them a boost. One mattress company, for example, realised that they neglected to mention their company name during the first 30 seconds of their ad. A simple script change massively improved their ad’s effectiveness.


Define your goal

First things first: What do you want your ad to achieve? Is it raising awareness of a new product? Driving more sales of an existing product? Promoting your brand? This desired outcome is known as the ‘advertising effect’, and it’s a key ingredient to include in your survey questions.

To test your ad’s performance, you first need to have a clear picture of what success will look like.

Test before, during, and after launch

Pre-testing ad concepts can help you make sure your campaign starts on the right note and avoid costly pitfalls. In-flight monitoring of a campaign shows you the performance arc across its lifecycle, helping you pinpoint where conversions occurred and how sentiment and purchase decisions evolve over time in response to your ad.

To test an ad concept, you should work with a pre-selected segment of your audience that represents the target group for the campaign. For example, people in a particular age group, those in specific job roles, or a combination of qualifying criteria.

This group can then be surveyed to see how they respond to different versions of an ad, or different ad concepts.
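As a rough illustration of picking a segment by qualifying criteria, the sketch below filters a respondent panel by age bracket and job role. The `Panelist` record, its fields, and the `select_segment` helper are hypothetical names invented for this example; your panel data will have its own shape.

```python
from dataclasses import dataclass


@dataclass
class Panelist:
    # Hypothetical panel record; field names are assumptions for this sketch.
    panelist_id: str
    age: int
    job_role: str


def select_segment(panel, min_age, max_age, job_roles):
    """Keep panelists who match BOTH the age bracket and one of the job roles."""
    roles = {role.lower() for role in job_roles}
    return [
        p for p in panel
        if min_age <= p.age <= max_age and p.job_role.lower() in roles
    ]


panel = [
    Panelist("p1", 29, "Marketing Manager"),
    Panelist("p2", 41, "Engineer"),
    Panelist("p3", 34, "marketing manager"),
    Panelist("p4", 52, "Marketing Manager"),
]

# Target group: marketing managers aged 25 to 45.
segment = select_segment(panel, 25, 45, ["Marketing Manager"])
print([p.panelist_id for p in segment])
```

Combining criteria with `and` narrows the segment; if you want anyone who meets either criterion, switch the condition to `or`.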

Determine which ad testing methodology to follow

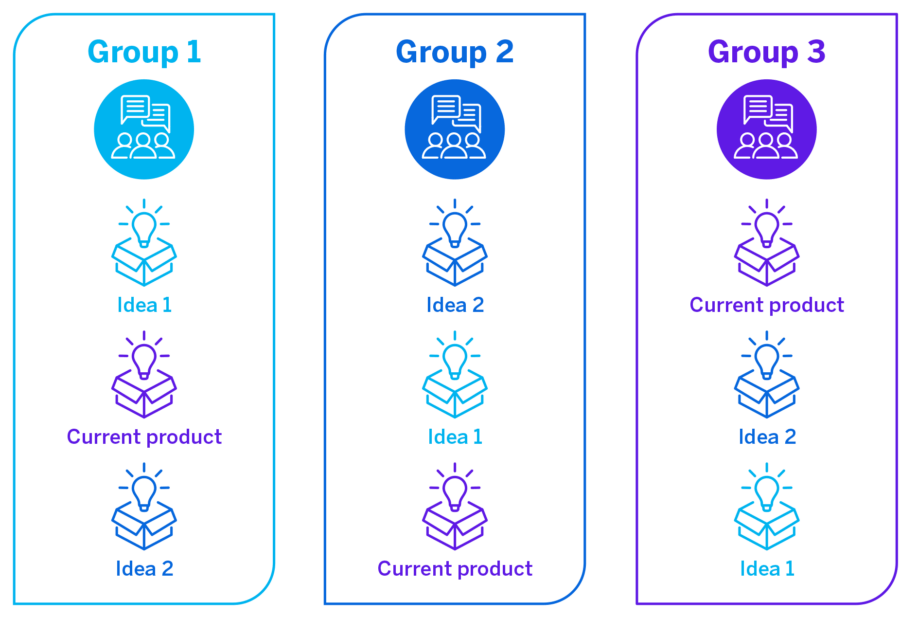

There are several different ways to test ads. Two of the most common market research methodologies are single ad group testing (monadic) and multi ad group testing (sequential monadic).

Single ad group testing: each group of research participants is shown one ad or ad concept in isolation.

Multi ad group testing: ad ideas are still shown to groups of respondents in isolation, but each group goes through the questions for one ad after another, so that they see all of the ad ideas.
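One way to picture the difference between the two methodologies is in how respondents are assigned to ads. The following is a minimal sketch, not a prescribed procedure: the function names are made up for illustration, and shuffling the viewing order for each respondent in the multi ad design is an assumption added here to limit order effects.

```python
import random


def assign_monadic(respondents, ads, seed=0):
    """Single ad group testing: each respondent is shown exactly one ad."""
    rng = random.Random(seed)
    shuffled = list(respondents)
    rng.shuffle(shuffled)
    # Deal respondents round-robin so group sizes differ by at most one.
    return {r: [ads[i % len(ads)]] for i, r in enumerate(shuffled)}


def assign_sequential_monadic(respondents, ads, seed=0):
    """Multi ad group testing: each respondent sees every ad, one at a time."""
    rng = random.Random(seed)
    plan = {}
    for r in respondents:
        order = list(ads)
        rng.shuffle(order)  # assumption: randomise viewing order per respondent
        plan[r] = order
    return plan


respondents = [f"r{i}" for i in range(9)]
ads = ["Ad A", "Ad B", "Ad C"]

single = assign_monadic(respondents, ads)
multi = assign_sequential_monadic(respondents, ads)

print(single["r0"])  # one ad only
print(multi["r0"])   # all three ads, in a shuffled order
```

With nine respondents and three ads, the single ad design yields three respondents per ad, whereas the multi ad design collects a response from all nine respondents for every ad.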

Each method you use to test ads has tradeoffs:

| | Single ad test | Multiple ad test |
|---|---|---|
| Pros | | |
| Cons | | |

Question design

There are several questions you will want to ask in your ad testing survey about each ad:

- Initial reactions: This will help you know if your ad will stand out.

- Appeal, believability, relevance, and clarity: These questions will help you ensure your messaging is correct.

- Likelihood to purchase: This will show how likely respondents are to buy after seeing the ad.

- Overall feedback in an open text field.

As well as collecting all the metrics about opinions and reactions, be sure to add a few fields that request age bracket, gender, profession, and any other demographic metrics you’re interested in. This information could help you to target your ad towards the right people once it has been created.

What style of questions should you use?

Use a mix of question styles. Make sure the statements are written in a neutral tone of voice, without any more words than necessary, and avoid emotive or descriptive terms that might bias the response.

For your ad test, you’ll be dealing with ideas or constructs that range from positive to negative, such as:

How appealing is the ad?

How believable is the ad?

How relevant is this ad to you personally?

Here are some question types and tactics to keep in mind:

- A 5-point Likert scale (strongly agree / agree / neutral / disagree / strongly disagree): This is a good way to ask these types of questions since it gives you comparable, specific results without too much respondent effort. A scale also avoids the agreement bias that can be introduced through yes/no questions.

- Open text fields: Include one or two of these for respondents to fill in with their own words. Text analysis software can gather and interpret text, giving insight into your respondents’ more nuanced feelings about your ad. For example: What is this ad saying to you?

- Radio buttons: Particularly useful for sounding out the clarity of your ad’s messaging, with options such as confused / unclear / didn’t understand. Where the respondent selects such an option, follow up with an open text field where they can elaborate in their own words on which part of the ad was confusing or wasn’t clear.

- Keep questions neutral: Don’t use any more words than necessary, and avoid emotive or descriptive terms that might bias the response.

- Capture demographic information: As well as collecting data about opinions and reactions, be sure to add a few fields that request age bracket, gender, profession, and any other demographic metrics you’re interested in at the end of the survey. This information could help you to target your ad towards the right people once it has been created.
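To make Likert responses comparable across ads, they are usually converted to numbers before analysis. The sketch below assumes a 1 to 5 coding and reports a mean plus a "top-two-box" share (the proportion answering agree or strongly agree). Both the coding and the top-two-box summary are common conventions brought in for illustration rather than something specified above, and `summarise_likert` is a hypothetical helper.

```python
from statistics import mean

# Assumed coding: 1 = strongly disagree ... 5 = strongly agree.
LIKERT_SCORES = {
    "strongly disagree": 1,
    "disagree": 2,
    "neutral": 3,
    "agree": 4,
    "strongly agree": 5,
}


def summarise_likert(responses):
    """Convert labels to scores, then return count, mean, and top-two-box share."""
    scores = [LIKERT_SCORES[r.strip().lower()] for r in responses]
    top_two = sum(1 for s in scores if s >= 4) / len(scores)
    return {"n": len(scores), "mean": mean(scores), "top_two_box": top_two}


# Example answers to a statement such as "This ad is appealing".
appeal = ["Agree", "Strongly agree", "Neutral", "Disagree", "Agree"]
summary = summarise_likert(appeal)
print(summary)
```

The mean gives a single comparable number per question, while the top-two-box share is easier to explain to stakeholders; reporting both guards against a mean that hides a polarised audience.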

Reporting and analysis

You’ll want to break your report into two different sections – overall findings and individual ad results. Your overall findings will give you an indication of which ads had the best initial reactions and are the most likely to drive sales. Individual ad analysis will allow you to dive deeper into the details of each individual ad to identify any weak spots.
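The two-part report can be sketched as a small aggregation. This is an illustrative outline only: it assumes responses have already been coded as numeric scores, and the `build_report` function, the metric names, and the sample rows are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def build_report(rows):
    """rows: iterable of (ad, metric, score) tuples with numeric scores."""
    by_ad_metric = defaultdict(list)
    for ad, metric, score in rows:
        by_ad_metric[(ad, metric)].append(score)

    # Individual ad results: mean score per metric for each ad.
    individual = defaultdict(dict)
    for (ad, metric), scores in by_ad_metric.items():
        individual[ad][metric] = mean(scores)

    # Overall findings: ads ranked by their average across metrics.
    overall = sorted(
        ((ad, mean(metrics.values())) for ad, metrics in individual.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )

    # Weak spot: the lowest-scoring metric for each ad.
    weakest = {ad: min(m, key=m.get) for ad, m in individual.items()}
    return overall, dict(individual), weakest


rows = [
    ("Ad A", "appeal", 4), ("Ad A", "appeal", 5),
    ("Ad A", "clarity", 2), ("Ad A", "clarity", 3),
    ("Ad B", "appeal", 3), ("Ad B", "appeal", 3),
    ("Ad B", "clarity", 4), ("Ad B", "clarity", 5),
]

overall, individual, weakest = build_report(rows)
print(overall)   # ads ranked best first
print(weakest)   # lowest-scoring metric per ad
```

In this made-up data, Ad B ranks first overall, while the individual breakdown shows clarity is the weak spot for Ad A and appeal is the weak spot for Ad B.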

If you have a lot of open-ended comments, reviewing each one individually can be tedious and time-consuming. To speed this up, text analytics software such as Qualtrics Text iQ can make quick work of your open text responses, allowing you to see both the key topics and the sentiment in responses to your ads.

Additional ad testing research

You can further hone your ad concept by iterating it through multiple rounds of testing and tweaking it in response to what your audience is telling you. These iterative results are valuable in the longer term too – they can help steer your creative team away from ideas that weren’t successful in testing and focus them on what works well for a given audience.

Types of tests

There are various types of advertisement tests, falling into three broad categories:

Inform

Tests that gauge how effectively an ad performs on key communications metrics like Ad Recall, Service Attributes, and Communicating Benefits.

Persuade

Tests that measure how effectively an ad changes perceptions and opinions through metrics like Persuasion, Personal Values, and Higher Order Values testing.

Convert

Tests the success of campaigns intended to drive actions, such as purchasing, using Response and Ad Effectiveness tests.

Advertising is a key part of your branding strategy. Learn how to track your brand awareness for optimum business outcomes.

How do you choose the right platform for ad tests?

Generally, whichever channel you run your ad on (social media, search engines with pay-per-click (PPC), sponsored listings, etc.) will come with built-in tools to quantify your ad’s performance in specific, measurable ways.

This is ‘what happened’ operational data (O-data) which reports factually on the interactions you care about. But this isn’t a joined-up solution. The ad that was a roaring success on Instagram is unlikely to work in the same way if you transplant it as-is to Facebook.

The more joined-up solution is to use experience data (X-data) that can unravel deeper meanings behind operational facts and figures. This is centred around the people you care about, rather than the things that happen. It’s all about the experiences they are having, what they value, what turns them off a purchase, and what drives their decisions. Data flows into a single platform through sentiment, rankings, and ratings, or in the customer’s own words through reviews and customer service conversations.

In the case of ad testing, the people you care about are likely to be your prospective customers, or the audience groups you’re targeting with your ads.
