Ranking questions are an important asset in a survey designer’s toolbox, but they can be tricky to use effectively. Are you using this question type correctly?

What are ranking questions?

Ranking questions are a survey question type that asks respondents to arrange a list of answers in order of preference, providing quantitative research data.

This question type allows respondents to identify which items are most and least preferred. It uses a closed-ended scale, so it only allows comparison between the specific options you list.

An example of a ranking question is: “Rank each item in order of importance, with no.1 as the ‘most important’ item, to no.10 as the ‘least important’ item.”

Free eBook: Qualitative research design handbook

Ranking questions vs rating questions

A common question is how rating questions and ranking questions differ in surveys. Here’s a helpful breakdown of how to tell them apart:

Rating questions

In surveys, the most commonly used question types are rating scale questions. Here, respondents are asked to indicate their level of agreement, satisfaction or frequency, for example.

Rating scale questions are best used when you want to measure your respondents’ attitude toward something. Questions that include “how much…” or “how likely…” work best when you don’t need to differentiate between desirable things. For example: To what extent do you agree with the following statement? (Strongly agree/agree/unsure/disagree/strongly disagree)

Rating scales are often not helpful for collecting the fine-grained data that researchers need to make decisions. For example, if you asked how much your respondents like particular dessert items, some might engage in satisficing (short-cutting by choosing any acceptable answer), such as answering ‘like’ for every item, which tells you little about which dessert they actually prefer.

Ranking questions

If you’re asking your respondents about things that they find desirable, or if you want to see what is important to them, a ranking question will help provide you with a ranked set of preferences. This is particularly helpful when you want to understand your respondents’ real-world choices.

Ranking questions are also useful for forcing your respondents to choose between two things, unlike rating questions, which capture their attitude towards each item separately.

For example, while some people may like everything on the dessert menu (they may answer ‘like’ for all items if it were a rating question), most will settle on whichever single dessert they prefer most (with a ranking question, one item has to be placed above another).

Types of ranking questions and examples

Here are some common examples of the kind of ranking questions you can ask in a survey:

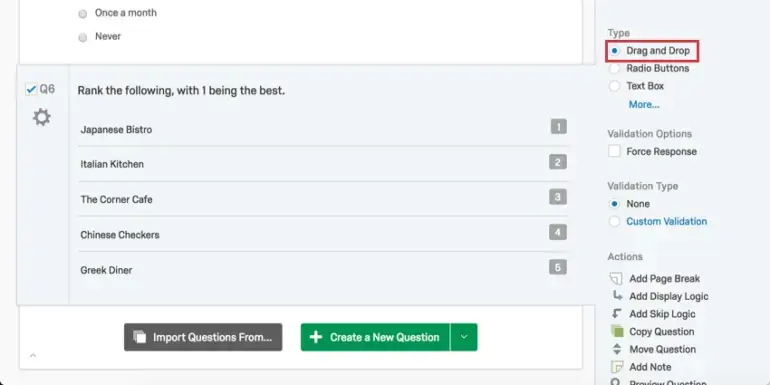

Drag and Drop ranking

Respondents drag items into their preferred order using this type of ranking question. This type is appropriate for shorter lists where you want your respondents to rank the items against one another.

Radio Button ranking

With the Radio Button type, your respondents choose a rank for each item from a list of possible rankings.

Example:

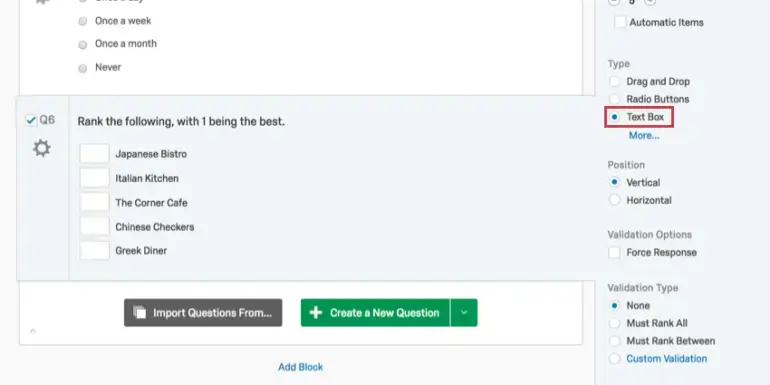

Text box ranking

With the Text Box type, your respondents type in their preferred ranking for the provided options. Respondents can be forced to rank all options, some options, or rule-based options through validation types.
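The validation described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how any particular survey platform implements it: the function name `validate_ranking`, the `require_all` flag and the error messages are all hypothetical.

```python
def validate_ranking(entries, require_all=True):
    """Check the ranks one respondent typed into the text boxes.

    entries maps each option to the rank typed in its box,
    or None if the box was left empty (hypothetical data shape).
    Returns a list of error messages; an empty list means valid.
    """
    errors = []
    # Keep only the boxes that were actually filled in.
    ranks = [r for r in entries.values() if r is not None]

    # Rule 1: optionally force the respondent to rank every option.
    if require_all and len(ranks) < len(entries):
        errors.append("Please rank every option.")
    # Rule 2: no two options may share the same rank.
    if len(set(ranks)) < len(ranks):
        errors.append("Each rank can only be used once.")
    # Rule 3: ranks must fall between 1 and the number of options.
    if any(r < 1 or r > len(entries) for r in ranks):
        errors.append(f"Ranks must be between 1 and {len(entries)}.")
    return errors
```

Calling `validate_ranking({"Cake": 1, "Pie": 1, "Ice cream": 3})` would flag the duplicated rank, while passing `require_all=False` lets respondents rank only some of the options.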

Select box ranking

The Select Box type is an alternative to ‘Drag and Drop’ ranking. Your respondents select items and then rank them by clicking the arrows to move each item up and down.

Ranking question best practice

Follow our best practice tips to get you up and running with ranking questions:

Dos

Do limit your ranking options

People are generally good at identifying a few things they prefer and a few they don’t. When ranking a list, you can expect reasonably reliable rankings for the top three and bottom three items. However, the middle rankings tend to be much noisier and less reliable.

Try to limit your ranking question options to between 6 and 10 items, and don’t read too much into the middle rankings. For example, in a list of 10 items, the difference between a ranking of 4 and 5 isn’t terribly strong or reliable for most people. Keeping this in mind when you design your ranking tasks will help you not only design better questions, but also draw better insights from your data.
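One way to act on this when analyzing results is to count how often each item lands in a respondent's top three or bottom three, rather than comparing exact middle positions. The sketch below assumes each response is a list of the same items, ordered from most to least preferred; the function name `summarize_rankings` and the data shape are illustrative assumptions, not part of any survey tool.

```python
from collections import Counter

def summarize_rankings(responses, top_n=3):
    """Count top-N and bottom-N placements per item.

    responses: list of orderings, each a list of the same items,
    most preferred first (assumed shape). Each list should hold at
    least 2 * top_n items so the top and bottom groups do not overlap.
    """
    top = Counter()
    bottom = Counter()
    for order in responses:
        top.update(order[:top_n])       # the first top_n positions
        bottom.update(order[-top_n:])   # the last top_n positions
    items = responses[0]
    return {item: {"top": top[item], "bottom": bottom[item]} for item in items}
```

Items that rarely appear in either group sit in the noisy middle, so small differences between them are best treated with caution.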

Do use broad categories to group items

If you have a large list of items that you would like to have ranked, consider using broad categories to group the items. For example, if you have a list of 35 product attributes, try to think of 4 or 5 categories that contain all of those attributes and ask your respondents to rank the items within each category and then rank the categories overall.

This approach of breaking large ranking tasks into smaller, more manageable tasks will result in a greater number of questions that each respondent must answer. However, the overall task will be much easier for your respondents. It will also provide you with a more detailed and insightful dataset, which will help you make better decisions.

Do pre-test ranking formats on different devices

Be sure to pre-test different types of ranking formats on different devices to see which is the best fit for your survey.

For example, drag and drop questions are likely easier for respondents who are using desktop computers than for respondents who are answering on a smartphone.

Do group closely related items together

It’s important to consider the possible outcomes when choosing your answer list. When your respondent ranks one option above another, the remaining items are pushed down in importance, so make sure the comparison is fair by keeping the items related. If your respondent ranks an ice cream as more important than the President of the United States, the comparison isn’t fair, as the significance of each varies greatly.

Don’ts

Don’t create long lists of items for people to rank

Researchers often want to ask respondents to rank huge lists to see what people care most about. This is a good way to get bad data, as you’ll get a wide spread of answers that won’t indicate true patterns or repeatable insights.

In addition, your respondents will not thank you for the excessive time they need to consider and rank each item against the others.

Don’t force an answer in your ranking question design

Sometimes, an item that you include won’t be relevant or known to your respondent. It’s unfair to make them rank it, as it will likely just end up at the bottom of the list.

Providing an ‘n/a’ (not applicable) option that lets respondents exclude a particular line item will make your results more accurate. It can also put less pressure on your respondents.
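When you analyze the results, ‘n/a’ answers should be left out rather than counted as a low rank. A minimal sketch of that idea, assuming each response is a dictionary mapping an item to its rank, with `None` standing in for ‘n/a’ (the function name `mean_ranks` and this data shape are assumptions made for illustration):

```python
def mean_ranks(responses):
    """Average rank per item, skipping 'n/a' answers.

    responses: list of dicts mapping item -> rank (int),
    or None where the respondent chose 'n/a' (assumed shape).
    """
    totals, counts = {}, {}
    for response in responses:
        for item, rank in response.items():
            if rank is None:
                continue  # 'n/a': leave this respondent out for this item
            totals[item] = totals.get(item, 0) + rank
            counts[item] = counts.get(item, 0) + 1
    # Items that every respondent marked 'n/a' are omitted from the result.
    return {item: totals[item] / counts[item] for item in totals}
```

Keep in mind that a respondent who excludes an item is ranking a shorter list, so averages are only roughly comparable across respondents.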

Don’t use ranking questions if you’re looking for value-based answers

Because you’re asking participants to rank items against each other, a ranking question does not tell you how they rate each item individually. If you’re looking for a value for each item, you’re better off using a rating question to ‘rate the importance of X’ on a 1-10 scale.

Another possibility is to follow up the ranking question with a rating question to evaluate the strength of the preference.

Learn more about survey question design

Depending on what you’re asking respondents and the device-types that respondents are using, we can help you implement ranking questions in a variety of ways.
